In today’s blog post, we will learn a workaround for scheduling or delaying a message with AWS SQS beyond its delivery delay limit of 15 minutes (900 seconds).

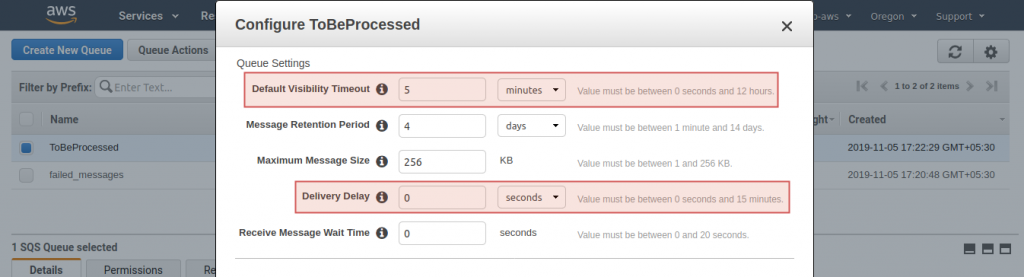

But first, let us briefly look at two SQS attributes. The first is Delivery Delay, which lets you specify a delay between 0 and 900 seconds (15 minutes). When it is set, any message sent to the queue becomes visible to consumers only after the configured delay has passed. The second is Visibility Timeout, the period during which a message that has been received from the queue stays invisible to other receive requests. Unless the message is deleted in that time, it becomes visible again.

If you want to learn about dead-letter queues and deduplication, you can read my other article: Processing High Volume Big Data Concurrently with No Duplicates using AWS SQS.

So, when a consumer receives a message, the message remains in the queue but is invisible for the duration of its visibility timeout, after which other consumers will be able to see the message. Ideally, the first consumer would handle and delete the message before the visibility timeout expires.

The upper limit for the visibility timeout is 12 hours. We can leverage this to schedule or delay a task.
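To make the two limits concrete, here is a minimal sketch of how the attributes might be set through boto3. The helper names `send_delayed` and `receive_with_timeout` and the injected `sqs` client are assumptions for illustration. `DelaySeconds` and `VisibilityTimeout` are the SQS parameters themselves.

```python
MAX_DELAY_SECONDS = 900          # SQS delivery delay ceiling (15 minutes)
MAX_VISIBILITY_SECONDS = 43200   # SQS visibility timeout ceiling (12 hours)


def send_delayed(sqs, queue_url, body, delay_seconds):
    """Send a message that stays hidden from consumers for delay_seconds."""
    if not 0 <= delay_seconds <= MAX_DELAY_SECONDS:
        raise ValueError("DelaySeconds must be between 0 and 900")
    return sqs.send_message(
        QueueUrl=queue_url,
        MessageBody=body,
        DelaySeconds=delay_seconds,
    )


def receive_with_timeout(sqs, queue_url, visibility_seconds):
    """Receive one message and hide it from other consumers for visibility_seconds."""
    if not 0 <= visibility_seconds <= MAX_VISIBILITY_SECONDS:
        raise ValueError("VisibilityTimeout must be between 0 and 43200")
    return sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=1,
        VisibilityTimeout=visibility_seconds,
    )
```

In a real setup, `sqs` would typically be `boto3.client("sqs")` and `queue_url` the URL of your own queue.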

A typical combination is SQS with Lambda, where the invoked function executes the task. A standard queue with a Lambda trigger enabled is usually consumed immediately: when a message is inserted into the queue, the Lambda function is invoked right away with the message available in the event object.

Note: If the Lambda function raises an error, the message stays in the queue for further receive requests; otherwise it is deleted.

That said, there could be 2 cases:

- A generic setup that can adapt to a range of time delays.

- A stand-alone setup built to handle only a fixed time delay.

The idea is to insert a message into the queue with the task details and the time to execute (target time), and have the Lambda function do the dirty work.
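As an illustration of such a message, the sketch below builds a JSON body carrying the task and its target time. The keys `task_details` and `execute_at` and the timestamp format mirror what the Lambda code in this post reads. The function name `build_message_body` and the sample task are hypothetical.

```python
import json
from datetime import datetime

# Timestamp layout taken from the handler in this post.
TIME_FORMAT = "%d/%m/%Y, %H:%M %p CST"


def build_message_body(task_details, execute_at):
    """Serialize the task and its target time into an SQS message body."""
    return json.dumps({
        "task_details": task_details,
        "execute_at": execute_at.strftime(TIME_FORMAT),
    })


# Hypothetical task scheduled for 25 December 2020, 09:30 Central time.
body = build_message_body({"action": "send_email"}, datetime(2020, 12, 25, 9, 30))
print(body)
```

The resulting string can be passed as the `MessageBody` when sending the message to the queue.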

Case 1:

The Lambda function checks whether the target time equals the current time. If it does, the function executes the task, and the message is deleted because the function finishes without error. Otherwise, the function changes the visibility timeout of that message to the difference between the target time and the current time, then raises an error so that the message stays in the queue.
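Case 1 could be sketched roughly as follows. This is only an illustration under a few assumptions: the message body carries `execute_at` in the format used elsewhere in this post, the queue URL is supplied by the caller, and a task counts as due once the target time has been reached or passed. `process_record`, `seconds_until`, `run_task`, and the injected `sqs` client are hypothetical names, while `change_message_visibility` is the boto3 SQS call. Because the visibility timeout is capped at 12 hours, longer delays are covered in several hops.

```python
import json
from datetime import datetime

TIME_FORMAT = "%d/%m/%Y, %H:%M %p CST"   # format assumed from this post
MAX_VISIBILITY_SECONDS = 43200           # SQS cap: 12 hours


def seconds_until(target, now):
    """Whole seconds remaining until target, never negative."""
    return max(0, int((target - now).total_seconds()))


def process_record(record, sqs, queue_url, now, run_task):
    """Run the task if it is due; otherwise hide the message and fail."""
    body = json.loads(record["body"])
    target = datetime.strptime(body["execute_at"], TIME_FORMAT)
    remaining = seconds_until(target, now)
    if remaining == 0:
        # Returning normally lets Lambda delete the message.
        run_task(body["task_details"])
        return
    # Not due yet: push the next delivery out by the remaining time (max 12 hours).
    sqs.change_message_visibility(
        QueueUrl=queue_url,
        ReceiptHandle=record["receiptHandle"],
        VisibilityTimeout=min(remaining, MAX_VISIBILITY_SECONDS),
    )
    # Raising makes the invocation fail, so the message stays in the queue.
    raise RuntimeError(f"Task not due for another {remaining} seconds")
```

Inside a Lambda handler, this would be called with `event['Records'][0]`, a `boto3.client("sqs")` instance, and the current time in the same time zone as `execute_at`. If the queue has a dead-letter queue attached, each hop counts as a receive, so `maxReceiveCount` would need to allow for the expected number of hops.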

Case 2:

The queue’s default visibility timeout is set to the required fixed time delay. The Lambda function checks whether the difference between the target time and the current time equals the fixed time delay. If it does, the function executes the task, and the message is deleted because the function finishes without error. Otherwise, it simply raises an error, which leaves the message untouched in the queue.

The message is retried once its visibility timeout, which equals the required fixed time delay, expires, and the task is then executed.

The problem with this approach is accuracy and scalability.

Here’s the Lambda code for Case 2:

Processor.py

    import json
    from datetime import datetime

    import dateutil.tz

    tz = dateutil.tz.gettz('US/Central')

    # Required delay. Minutes are assumed here; adjust the unit to your needs.
    fixed_time_delay = 1


    def lambda_handler(event, context):
        message = event['Records'][0]
        result = json.loads(message['body'])
        task_details = result['task_details']
        target_time = result['execute_at']

        # Parse the target time from the message.
        tt = datetime.strptime(target_time, "%d/%m/%Y, %H:%M %p CST")
        print(tt)

        # Current Central time as a naive datetime, truncated to the minute.
        tn = datetime.now(tz).replace(second=0, microsecond=0, tzinfo=None)
        print(tn)

        delta_time = tn - tt
        print(delta_time)

        # Extract the delay in the same unit as fixed_time_delay (minutes assumed).
        delta_in_minutes = int(delta_time.total_seconds() // 60)

        if delta_in_minutes == fixed_time_delay:
            # execute task logic
            print(task_details)
        else:
            # Fail the invocation so the message stays in the queue for a retry.
            raise Exception('Task is not due yet')

Conclusion:

Scheduling tasks using SQS isn’t effective in all scenarios. You could use the Wait state in AWS Step Functions to achieve millisecond-level accuracy, or DynamoDB’s TTL feature to build an ad hoc scheduling mechanism. The choice of service depends largely on the requirement. So, here’s a wonderful blog post that gives you a bigger picture of the different ways to schedule a task on AWS.

This story is authored by Koushik. Koushik is a software engineer specializing in AWS Cloud Services.
