In one of our earlier posts, we had talked about setting up a data lake using AWS LakeFormation. Once the data lake is setup, we can use Amazon Athena to query data. Athena is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL. Athena is serverless, so there is no infrastructure to manage. With Athena, there is no need for complex ETL jobs to prepare data for analysis. Today, we will explore querying the data from a data lake in S3 using Redshift Spectrum. This use case makes sense for those organizations that already have a significant exposure to using Redshift as their primary data warehouse.

Amazon Redshift Spectrum

Amazon Redshift Spectrum is used to efficiently query and retrieve structured and semistructured data from files in Amazon S3 without having to load the data into Amazon Redshift tables. Amazon Redshift Spectrum resides on dedicated Amazon Redshift servers that are independent of your cluster. Redshift Spectrum pushes many compute-intensive tasks, such as predicate filtering and aggregation, down to the Redshift Spectrum layer.

How is Amazon Athena different from Amazon Redshift Spectrum?

- Redshift Spectrum needs an Amazon Redshift cluster and an SQL client that’s connected to the cluster so that we can execute SQL commands. But Athena is serverless.

- In Redshift Spectrum the external tables are read-only, it does not support insert query. Athena supports the insert query which inserts records into S3.

Amazon Redshift cluster

To use Redshift Spectrum, you need an Amazon Redshift cluster and a SQL client that’s connected to your cluster so that you can execute SQL commands. The cluster and the data files in Amazon S3 must be in the same AWS Region.

Redshift cluster needs the authorization to access the external data catalog in AWS Glue or Amazon Athena and the data files in Amazon S3. Let’s kick off the steps required to get the Redshift cluster going.

Create an IAM Role for Amazon Redshift

- Open the IAM console, choose Roles.

- Then choose, Create role.

- Choose AWS service, and then select Redshift.

- Under Select your use case, select Redshift – Customizable and then choose Next: Permissions.

- Then Attach permissions policy page appears. Attach the following policies AmazonS3FullAccess, AWSGlueConsoleFullAccess and AmazonAthenaFullAccess

- For Role name, enter a name for your role, in this case, redshift-spectrum-role.

- Choose Create role.

Create a Sample Amazon Redshift Cluster

- Open the Amazon Redshift console.

- Choose the AWS Region. The cluster and the data files in Amazon S3 must be in the same AWS Region.

- Select CLUSTERS and choose Create cluster.

Cluster Configuration:- Based on the size of data and type of data(compressed/uncompressed), select the nodes.

- Amazon Redshift provides an option to calculate the best configuration of a cluster, based on the requirements. Then choose to Calculate the best configuration for your needs.

- In this case, use dc2.large with 2 nodes.

- Specify Cluster details.

- Cluster identifier: Name-of-the-cluster.

- Database port: Port number 5439 which is the default.

- Master user name: Master user of the DB instance.

- Master user password: Specify the password.

- In the Cluster permissions section, select Available IAM roles and choose the IAM role that was created earlier, redshift-spectrum-role. Then choose the Add IAM role.

- Select Create cluster, wait till the status is Available.

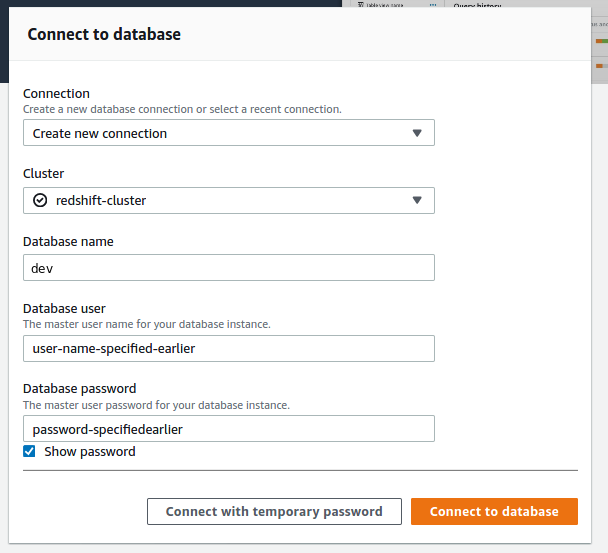

Connect to Database

- Open the Amazon Redshift console and choose EDITOR.

- Database name is dev.

Create an External Schema and an External Table

External tables must be created in an external schema.

- To create an external schema, run the following command. Please replace the iam_role with the role that was created earlier.

create external schema spectrum

from data catalog

database 'spectrumdb'

iam_role 'arn:aws:iam::xxxxxxxxxxxx:role/redshift-spectrum-role'

create external database if not exists;- Copy data using the following command. The data used above is provided by AWS. Configure aws cli on your machine and run this command.

aws s3 cp s3://awssampledbuswest2/tickit/spectrum/sales/ s3://bucket-name/data/source/ --recursive- To create an external table, please run the following command. The table is created in the spectrum.

create external table spectrum.table_name(

salesid integer,

listid integer,

sellerid integer,

buyerid integer,

eventid integer,

dateid smallint,

qtysold smallint,

saletime timestamp)

row format delimited

fields terminated by '\t'

stored as textfile

location 's3://bucket-name/copied-prefix/';Now the table is available in Redshift Spectrum. We can analyze the data using SQL queries like so:

SELECT

*

FROM

spectrum.rs_table

LIMIT 10;Create a Table in Athena using Glue Crawler

In case you are just starting out on the AWS Glue crawler, I have explained how to create one from scratch in one of my earlier articles. In this case, I created the rs_table in spectrumdb database.

Comparison between Amazon Redshift Spectrum and Amazon Athena

I ran some basic queries in Athena and Redshift Spectrum as well. The query elapsed time comparison is as follows. It take about 3 seconds on Athena compared to about 16 seconds on Redshift Spectrum.

The idea behind this post was to get you up and running with a basic data lake on S3 that is queryable on Redshift Spectrum. I hope it was useful.

This story is authored by PV Subbareddy. He is a Big Data Engineer specializing on AWS Big Data Services and Apache Spark Ecosystem.

Comments